-

American Evaluation Association

-

As a program evaluation practitioner, I have been an active member of the American Evaluation Association (AEA) for many years. This folder contains many of my AEA presentations from my former position as Associate Professor at the Morgridge College of Education, from my previous life as Director of Evaluation and Research at the Mental Health Center of Denver (MHCD), and from my current life as Executive Director of the Aurora Research Institute. Please note that I keep our propensity score presentations in a separate folder.

-

AEA 2019

-

Statistical analyses commonly used in program evaluation and how to conduct them using the free statistical software R (R Commander)

Installing R and RCmdr in your system.pdf

This workshop, conducted in Minneapolis in 2019 (AEA 2019), describes in detail how to install R and R Commander (a point-and-click interface) in order to conduct several of the more common statistical procedures used in program evaluation. This work was conducted with Dr. Priyalatha Govindasamy (Department of Psychology and Counseling, Sultan Idris Education University).

Please note that the file included here covers only the installation of R and R Commander. If you are interested in the full deck, examples, and videos, please contact me.

-

AEA 2016

-

In this study, authored by Dr. Priyalatha Govindasamy, myself, and Hanan ALGhamdi, we conducted a multivariate meta-analysis of the correlations among GRE-Verbal, GRE-Quantitative, and GPA scores. Thirteen independent studies with a total of 39 effect sizes were included to synthesize the relationships between students’ academic performance and their GRE scores. The averaged effect sizes were then used to fit a multiple regression model that estimates the influence of GRE-Verbal and GRE-Quantitative scores in predicting students’ GPA.

-

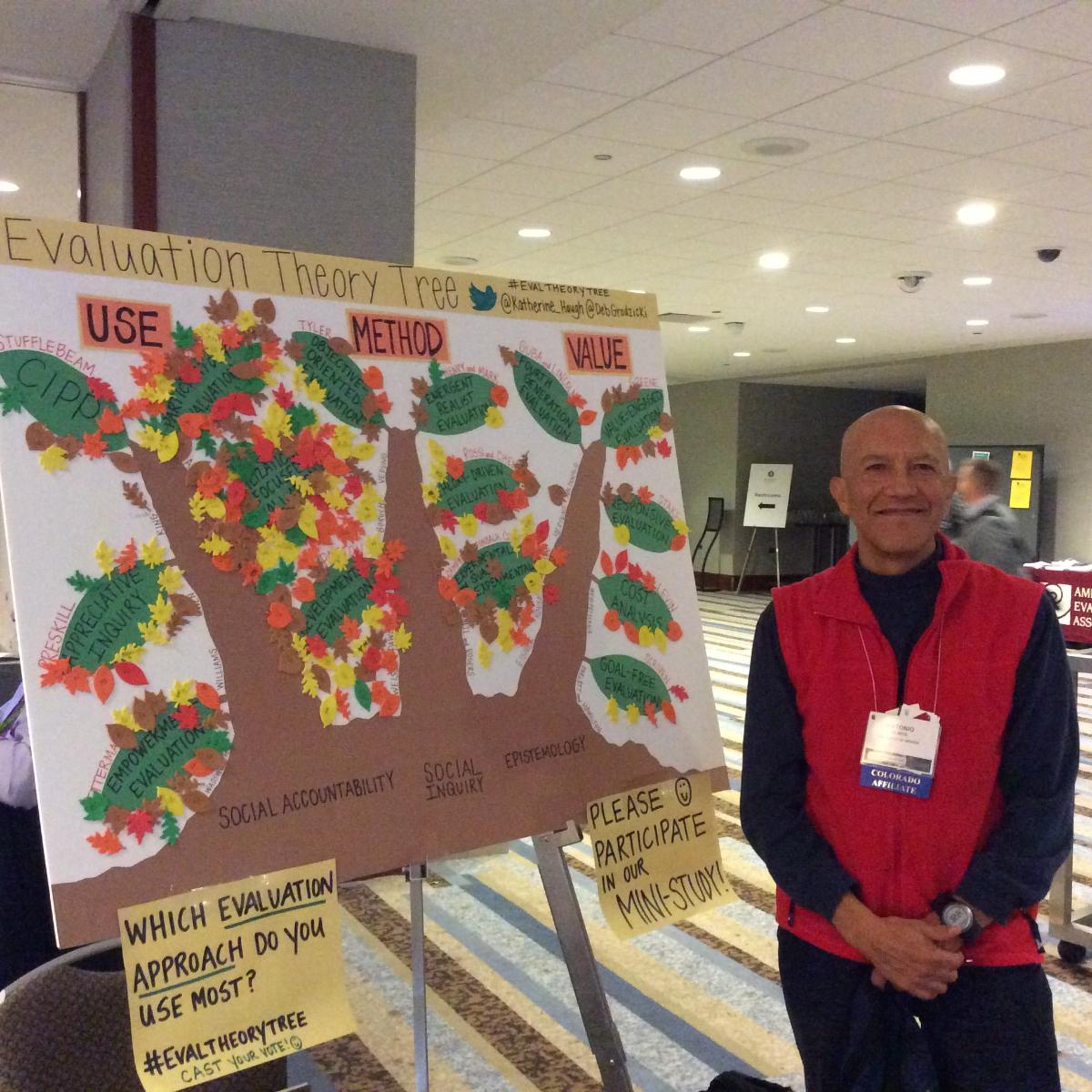

This study, authored by Ann Wacker, Maggie Patel, and myself, explores how students exposed to program evaluation theorists for the first time tend to link information about them. We use tools from Social Network Analysis (SNA) to examine how novice program evaluators connect different theorists. We present implications for refining current program evaluation theory and practice, as well as for developing alternative approaches to teaching evaluation.

-

AEA 2013

-

This presentation describes work done in collaboration with RMS students Cheryl Wink and Leigh Alvarado-Benson on the use of dashboards for displaying information. The work was motivated by my experience making outcomes information more useful to program stakeholders.

-

This presentation describes analyses conducted with Dr. Susan Hutchinson and Eric Teman (UNCO) to test whether different software packages produce the same statistical conclusions. When assessing model fit in SEM models, we found that different software packages produce slightly different results. This is an unsettling finding because, as practitioners, program evaluators have never questioned whether the results of a study might differ depending on the software used.

-

AEA 2012

-

This presentation describes work done with Susan Hutchinson (UNCO), Scott Stanley, and Galena Rhoades on invariance in a longitudinal measure. The study illustrates how a construct can change over time because of developmental changes, and how those changes may lead to erroneous conclusions if they are not taken into account.

-

AEA 2010

-

This presentation describes work conducted at MHCD with Cathie McLean and CJ McKinney on the use of outcomes analysis in the search for quality improvement. It describes our vision of the interplay among outcomes, the development of dashboards and other ways to present the information to stakeholders, and the impact on quality improvement.

-

AEA 2009-2001

-

Presentations submitted to AEA while I was director of research and development at MHCD. Most of these presentations were conducted in collaboration with Kate DeRoche (now Lusczakoski), CJ McKinney, and other MHCD staff.

-

This presentation covers research conducted by CJ McKinney in collaboration with Dr. LaGanga to monitor multiple aspects of mental health recovery using Multivariate Quality Control charts, a technique developed by Dr. McKinney. The presentation includes some prototypes we were testing to monitor these outcomes.

-

In this presentation, we describe the development of an instrument that measures how staff promote recovery, from the point of view of the consumers of mental health services. Dr. DeRoche and I used Rasch analysis to conduct this study.

-

This presentation covers work conducted by Kate DeRoche in collaboration with Dr. Prado to develop an instrument intended to measure well-being in youth. It describes some of the early steps in the development of this instrument. This work was conducted in collaboration with Scott Nebel, Jonathan Gunderson, Sarah Shaw, and Melanie Parker.

-

This presentation demonstrates how we used analogies to better illustrate some of the statistical and psychometric terms involved in the development of better outcomes. It provides some suggestions for presenting difficult concepts in non-statistical, non-threatening terms.

-

This is an update on the evaluation of "Fortaleciendo la Comunidad," an HIV program with strong collaboration from several community partners. The evaluation was conducted by Dr. Kate DeRoche, Dr. Prado, Hollie Granado, and Sarah Shaw.

-

We present a description of some models developed by Kate DeRoche and CJ McKinney using Latent Growth Model techniques.

-

Using structural equation modeling (SEM), the current study explores the relationships among five areas of consumers’ perceptions of mental health recovery: hope, symptom interference, social networks, active growth/orientation, and perceived safety.

-

Participation poster 2007 FINAL.pdf

Poster describing the perceptions of clinical staff (case managers, therapists) regarding consumers' participation in mental health services.

-

Demonstration on the use of Hierarchical Linear Models (HLM) in Evaluation

-

AEA-07_Recovery_Resiliency.pdf

Preliminary results on the development of instruments to measure recovery and resiliency for children and youth within Systems of Care.

-

AEA2006_BuildingEvaluationCapacityCommunitiesCol

This presentation describes some of the lessons we learned as we developed culturally competent evaluations with communities of color.

-

AEA2006_Psychometric-final.pdf

In this presentation, we describe the Recovery Instruments developed at MHCD from the perspectives of both Classical Test Theory (CTT) and Item Response Theory (IRT), based on data collected from approximately 3500 consumers over about 1 year.

-

Using a recently developed severity index (Olmos et al., 2003), we collected monthly data from approximately 500 consumers over a period of 3 years and then used HLM to evaluate improvement over time.

-

This presentation describes the development of a severity index using Thurstone's method of paired comparisons. The results of this instrument were then used to run a regression discontinuity analysis comparing individuals receiving Assertive Community Treatment (ACT) services with individuals in a control group.

-

AEA2002_instrumentEquivalence.pdf

This presentation describes some preliminary analyses that Dr. Susan Hutchinson (UNCO) and I conducted to test whether an instrument used by the State of Colorado to assess problem severity functioned equivalently across ethnic groups. We found that the instrument did not work the same way for different ethnic groups.